Neural networks are artificial intelligence (AI) models inspired by the human brain. They learn from data rather than being explicitly programmed for each task, which makes them well suited to speech recognition, image classification, and natural language processing. Neural networks (NNs) are powerful tools for machine learning, pattern recognition, and computer vision. In this article, I will explain what they are, where the idea comes from, how they work, their main types, and why they’re useful.


What are neural networks?

A neural network is a machine learning model that loosely imitates the networks of neurons in the human brain. In the brain, neurons receive signals from other neurons and pass them on to further neurons, and this continues until the signal reaches its destination. Artificial neural networks work similarly, except that they are built from software components rather than biological cells. They consist of many interconnected nodes called artificial neurons. Each node receives input from other nodes, processes that input, and then sends its output on to other nodes.

What are artificial neural networks (ANNs)?

Artificial neural networks are computational models inspired by the way the brain learns. These models consist of interconnected nodes that pass information between them. The connections between nodes can be of different types, including excitatory and inhibitory. Excitatory connections push the receiving node toward becoming active, while inhibitory connections push it away from becoming active. In an ANN, excitatory connections correspond to positive weights and inhibitory connections to negative weights.
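To make the idea of excitatory and inhibitory connections concrete, here is a minimal sketch in Python. The function names and the threshold of 0.5 are my own assumptions for illustration; they do not come from any particular library.

```python
def net_input(inputs, weights):
    """Sum each input multiplied by the weight of its connection."""
    return sum(x * w for x, w in zip(inputs, weights))


def is_active(inputs, weights, threshold=0.5):
    """Assumed rule: the node is active when its net input exceeds the threshold."""
    return net_input(inputs, weights) > threshold


inputs = [1.0, 1.0]

# Two excitatory (positive) connections push the node toward activity.
excitatory_only = [0.4, 0.4]

# The second connection is inhibitory (negative) and cancels the first.
with_inhibition = [0.4, -0.4]

print(is_active(inputs, excitatory_only))  # True, because 0.8 > 0.5
print(is_active(inputs, with_inhibition))  # False, because 0.0 is not > 0.5
```

The same two inputs give opposite results depending only on the sign of one weight, which is what "inhibitory" means in practice.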

Topology of artificial neural networks

The topology of a neural network describes how its neurons are connected, and it is crucial to how the network operates and learns. Artificial neurons are the building blocks of an artificial neural network, and they work together to solve problems. A basic neural network is made up of three layers: an input layer, a hidden layer, and an output layer. Artificial neural network topologies can be divided into two categories.

- Feedforward ANN (FFANN): This type of ANN consists of an input layer, an output layer, and one or more hidden layers of neurons. The flow of information is unidirectional: the input layer sends information to the hidden layer, which then sends it on to the output layer. No feedback loops exist in an FFANN, and its inputs and outputs are fixed. See figure:

- Feedback ANN (FBANN): This type of ANN works like an FFANN, but the output is fed back into earlier layers so that the network can refine its result. Feedback networks are commonly used in content-addressable memory. See figure:

A selection of feedforward and feedback network architectures, adapted from Jain and Mao (1996), is shown below:

Source: Anil K. Jain, Jianchang Mao, and K. Mohiuddin. Artificial Neural Networks: A Tutorial. IEEE Computer, 1996, 29, 31-44.
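The difference between the two topologies can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the feedforward part uses a sigmoid activation, and the feedback part uses a small Hopfield-style network as one example of a content-addressable memory. Neither choice is prescribed by the article, and all function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def layer(inputs, weights, biases):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def feedforward(inputs, layers):
    """Feedforward: the signal passes through each layer once, with no loops."""
    signal = inputs
    for weights, biases in layers:
        signal = layer(signal, weights, biases)
    return signal


def train_hopfield(patterns):
    """Build symmetric weights from patterns of +1/-1 values (no self-connections)."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j]
    return weights


def recall(weights, state, max_steps=10):
    """Feedback: the output is fed back in as the next input until it stops changing."""
    state = list(state)
    for _ in range(max_steps):
        new_state = [
            1 if sum(w * s for w, s in zip(row, state)) >= 0 else -1
            for row in weights
        ]
        if new_state == state:
            break
        state = new_state
    return state


# Feedforward: 2 inputs -> 2 hidden neurons -> 1 output (weights are arbitrary).
network = [
    ([[0.5, -0.5], [0.3, 0.8]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.0]),
]
print(feedforward([1.0, 2.0], network))

# Feedback: store one pattern, then recover it from a copy with one flipped value.
stored = [1, -1, 1, -1, 1, -1]
memory = train_hopfield([stored])
print(recall(memory, [1, -1, 1, -1, 1, 1]))  # [1, -1, 1, -1, 1, -1]
```

In the feedforward case each layer is visited exactly once. In the feedback case the same weights are applied repeatedly, and the loop ends when the state settles on the stored pattern.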

The working mechanism of Neural Networks

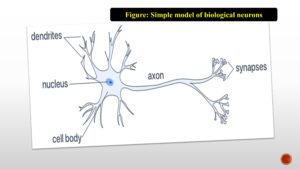

The inspiration for neural network architecture comes from the human brain. A network of neurons joined by axons and dendrites is referred to as a biological neural network. Synapses form the connections between neurons. The cell body (also known as the soma), the dendrites, and the axon are the three main components of a neuron. The given figure illustrates a biological neural network:

- Dendrites: These branching structures extend away from the cell body. Dendrites pick up signals from neighboring neurons and carry them to the cell body; the resulting output is then sent on to other neurons via the axon.

- Cell body (Soma): The cell body, or soma, contains the nucleus, rough endoplasmic reticulum, and other cellular components.

- Axon: Each neuron has one axon, which carries the output signal along its length as electrical impulses. The axon’s terminal ends in a synapse that connects it to a dendrite of another neuron. Axons work like a row of dominoes, passing impulses from one neuron to the next.

Did you know?

“In an artificial neural network, dendrites correspond to inputs, the cell nucleus to nodes, synapses to weights, and axons to outputs.”

Artificial neural networks (ANNs), also known as neural networks (NNs), are computing architectures that draw inspiration from the biological neural networks found in brains. An ANN is a group of simple nodes, called neurons, that are densely interconnected, and such networks form the basis of many intelligent computer systems. A simple schematic representation of an artificial neural network can be seen in the figure below:

The components of artificial neural networks can be defined as follows:

- Neurons: The artificial neuron is the smallest and most fundamental unit of a neural network. A neuron is made up of three elements: input connections, a core, and output connections. The neuron receives an input, processes it, passes the result through an activation function, and then produces the result as output.

- Weight (W): A weight determines how strongly an input affects the output. Each input is multiplied by its weight, much like the slope in linear regression. Weights are numerical parameters that specify how much each neuron influences the others. Here (figure), the neuron receives the values X1, X2, X3, ..., Xn as input through the corresponding input connections, and each connection has a weight value assigned to it: w1, w2, w3, ..., wn.

- Bias: Bias is an additional constant input to the neuron that helps the model fit the given data as closely as possible. In other words, bias gives the neuron the flexibility to shift its output independently of its inputs.

- Activation function: The activation function determines a neuron’s activity status, that is, how strongly it fires for a given input. Its purpose is to make the neuron’s output non-linear, which makes it a crucial part of an artificial neural network. Activation functions in a neural network are inspired by the brain’s activity. Common types of activation functions will be covered in the next article.
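The four components above can be combined into a single artificial neuron. The following is a minimal sketch in Python, assuming the usual formulation in which the neuron computes a weighted sum of its inputs, adds the bias, and passes the result through an activation function. The function names and the example values are my own and purely illustrative.

```python
import math


def step(z):
    """Fires (1.0) when the input is non-negative, otherwise stays silent (0.0)."""
    return 1.0 if z >= 0 else 0.0


def sigmoid(z):
    """Squashes any input smoothly into the range between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    """Passes positive inputs through unchanged and maps negative inputs to 0."""
    return max(0.0, z)


def neuron(inputs, weights, bias, activation):
    """Weighted sum of inputs X1..Xn with weights w1..wn, plus bias, then activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)


x = [1.0, 2.0, 3.0]    # inputs X1, X2, X3
w = [0.5, -1.0, 0.5]   # weights w1, w2, w3 (weighted sum is 0.0)

print(neuron(x, w, 0.0, sigmoid))  # 0.5
print(neuron(x, w, 1.0, relu))     # 1.0, the bias shifts the sum from 0.0 to 1.0
print(neuron(x, w, -1.0, step))    # 0.0, the bias pushes the sum below zero
```

Changing only the bias changes the output for the same inputs and weights, which is the flexibility described in the bias item above.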

Types of Neural Networks (NNs)

Artificial neural networks come in a variety of forms, each with specific advantages. Five basic types are covered here: PNNs, MLPNNs, FFNNs, CNNs, and RNNs, as can be seen in the figure below.

Artificial neural networks can be categorized by the way information moves from the input node to the output node. Here are a few examples:

- Perceptron neural networks (PNNs): The perceptron is one of the earliest and most basic models of a neuron. Because it has just two layers (input and output) and no hidden layer, a PNN is often referred to as a single-layer neural network.

- Multilayer perceptrons (MLPNNs): A multilayer perceptron (MLP) is a fully connected multi-layer neural network. It contains at least three layers: an input layer, one or more hidden layers, and an output layer. It is referred to as a deep ANN if it has more than one hidden layer. The MLP is a common example of a feedforward artificial neural network. MLPNNs are frequently used in computer vision applications, social network data reduction, and data prediction systems.

- Feedforward neural networks (FFNNs): The FFNN is one of the most basic kinds of artificial neural network. Data travels in one direction, from the input nodes through any hidden nodes to the output nodes. Computer vision and face recognition technologies both use feedforward neural networks. They are loosely comparable to the way humans learn from examples, and they perform well at tasks that require pattern recognition and classification.

- Convolutional neural networks (CNNs): CNNs are a specific type of deep neural network first developed in the 1980s. A CNN typically contains input, convolution, pooling, fully connected, and output layers, in that order. It is a deep learning architecture that learns its features directly from data. CNNs are very helpful for recognizing objects, classes, and categories in photos by looking for patterns in the images, and they can also be quite useful for categorizing signals, time series, and audio data. Convolutional neural networks are comparable to visual perception in humans: they recognize patterns in images and videos.

- Recurrent neural networks (RNNs): RNNs are neural networks that operate on sequential data such as time series. They were originally created to model temporal sequences, for example the words in a sentence. To properly learn the meaning of these words, an RNN must take the context of the entire sentence into account. Recurrent neural networks are comparable to the memory function of the human brain: they can remember past events and use that knowledge to make predictions about future events.
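Two of the ideas in this list, pattern detection in CNNs and memory in RNNs, can be shown with very small Python sketches. Both are simplified assumptions of mine rather than full networks: the convolution is one-dimensional with a hand-picked kernel, and the recurrent unit has a single scalar state with a tanh activation. The function names are hypothetical.

```python
import math


def conv1d(signal, kernel):
    """Slide the kernel along the signal and take a weighted sum at each position."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def rnn_run(sequence, w_in, w_rec, bias=0.0):
    """Scalar recurrent unit: the state h carries information from earlier steps."""
    h = 0.0
    states = []
    for x in sequence:
        h = math.tanh(w_in * x + w_rec * h + bias)
        states.append(h)
    return states


# CNN idea: the kernel [-1, 1] responds wherever the signal changes.
print(conv1d([0, 0, 1, 1, 0], [-1, 1]))  # [0, 1, 0, -1]

# RNN idea: with a recurrent weight, the first input still influences
# the state after the input has dropped to zero.
print(rnn_run([1.0, 0.0, 0.0], w_in=1.0, w_rec=1.0))

# Without a recurrent weight, the state forgets the input immediately.
print(rnn_run([1.0, 0.0, 0.0], w_in=1.0, w_rec=0.0))
```

In a real CNN or RNN the kernel and the weights are learned from data instead of being chosen by hand.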

Applications of neural networks

Neural networks are useful for performing various tasks, including speech recognition, image analysis, and natural language processing. They are also commonly used in robotics and autonomous vehicles.

Conclusion

To learn something properly, we must first understand it, so that we can use that knowledge in the future. That means studying the fundamentals. This article gave a quick overview of ANNs to help you understand neural networks. Artificial neural networks are developed to digitally imitate the human brain. They can be used to create the next generation of computers and are already being used for complex analysis in a variety of fields, from engineering to medicine.

FAQ

Question: What is a neural network in simple words?

Answer: A neural network is an artificial intelligence strategy for teaching computers to analyze data in a manner inspired by the human brain.

Question: Do humans have a neural network?

Answer: The human brain contains a biological neural network with billions of connections.

Question: How many types of NN are there?

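Answer: By the kind of task they solve, neural networks can be divided into three main categories: classification, sequence learning, and function approximation. By architecture, this article describes five basic types: PNNs, MLPNNs, FFNNs, CNNs, and RNNs.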

Question: Is CNN a type of ANN?

Answer: It is one of several kinds of artificial neural networks.

Question: Is RNN a type of ANN?

Answer: RNN is a type of ANN that works with sequential or time series data.

Question: What is deep learning?

Answer: Deep learning is a subset of machine learning (ML) that is based on neural networks with three or more layers.
